The four-pillar AI Measurement Framework

The problem: lots of AI, no scoreboard

Budgets are growing. Pilots are launching. New tools appear every sprint. But without a structured way to evaluate all of it, you can't tell which initiatives are delivering real value and which are just generating activity. You can't decide what to scale, what to sunset, or where to double down.

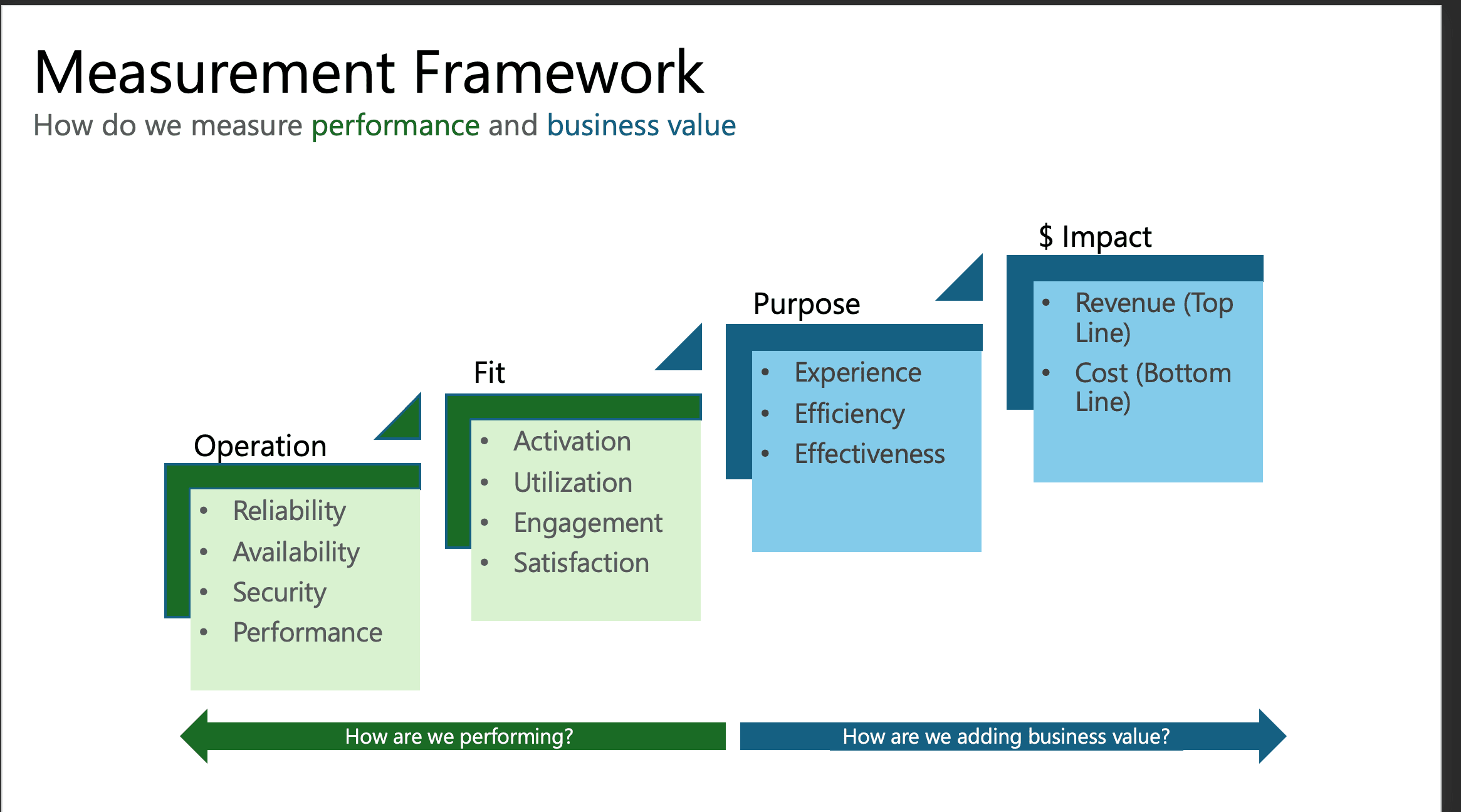

The framework below organizes measurement into four pillars that answer two questions: "How are we performing?" (Operation + Fit) and "How are we adding business value?" (Purpose + Impact).

Operation — Is it running well?

The foundation. If this is broken, nothing else matters.

Before you measure value, confirm the thing actually works.

Reliability

Accuracy, hallucination rate, consistency across queries, model drift

Availability

Uptime %, MTTR, API success rates — if it's down, the workflow stops

Security

Data exposure events, prompt injection surface, access control gaps

Performance

Latency (p50/p95/p99), throughput, cost per inference, token efficiency
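To make the Availability and Performance metrics concrete, here is a minimal sketch of how they might be computed from raw logs. It assumes a hypothetical list of request latencies in milliseconds and a list of outage durations in minutes. The function names and sample values are illustrative and not taken from any particular monitoring tool.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def uptime_percent(period_minutes, outage_minutes):
    """Share of the period during which the service was up."""
    return 100 * (period_minutes - sum(outage_minutes)) / period_minutes

def mttr_minutes(outage_minutes):
    """Mean time to recovery, taken here as the average outage duration."""
    return sum(outage_minutes) / len(outage_minutes) if outage_minutes else 0.0

# Hypothetical sample data: ten requests and three outages in a 30-day period.
latencies_ms = [120, 135, 150, 180, 210, 240, 300, 450, 900, 2400]
outages_min = [12, 30, 18]

p50 = percentile(latencies_ms, 50)
p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)
uptime = uptime_percent(30 * 24 * 60, outages_min)
mttr = mttr_minutes(outages_min)

print(f"p50={p50}ms p95={p95}ms p99={p99}ms uptime={uptime:.2f}% MTTR={mttr:.0f}min")
```

With only ten samples, p95 and p99 land on the same slow request. Tail percentiles only become meaningful with much larger samples.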

Fit — Are people actually using it?

A tool nobody touches is shelfware, no matter how good it is.

You rolled it out to 500 people and 30 tried it? That's not an AI problem — that's an adoption problem.

Activation

What % of people with access have completed a first meaningful action?

Utilization

DAU/WAU, sessions per user, depth of feature usage — is it part of the workflow?

Engagement

Retention curves (D7/D30/D90). A curiosity spike that flatlines is a red flag.

Satisfaction

CSAT, NPS, qualitative feedback. Usage ≠ happiness — forced usage breeds workarounds.
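The Activation, Utilization, and Engagement numbers above can be derived from a simple usage log. The sketch below assumes a hypothetical log of `(user_id, date)` events. The retention definition used here (a user counts as retained if they are seen again at least N days after first use) is one of several reasonable choices, not the only one.

```python
from datetime import date, timedelta

def activation_rate(users_with_access, activated_users):
    """Share of people with access who completed a first meaningful action."""
    return len(activated_users & users_with_access) / len(users_with_access)

def stickiness(events, day):
    """DAU/WAU: users active on `day` divided by users active in the 7 days ending on `day`."""
    week_start = day - timedelta(days=6)
    dau = {user for user, d in events if d == day}
    wau = {user for user, d in events if week_start <= d <= day}
    return len(dau) / len(wau) if wau else 0.0

def retention(events, n_days):
    """Share of users seen again at least n_days after their first recorded use."""
    first, last = {}, {}
    for user, d in events:
        first[user] = min(first.get(user, d), d)
        last[user] = max(last.get(user, d), d)
    if not first:
        return 0.0
    kept = sum(1 for user in first if (last[user] - first[user]).days >= n_days)
    return kept / len(first)

# The rollout example from the text: 500 people have access, 30 tried the tool.
rate = activation_rate(set(range(500)), set(range(30)))

# Hypothetical usage log.
today = date(2024, 6, 30)
events = [
    (1, today), (2, today),
    (3, today - timedelta(days=3)),
    (4, today - timedelta(days=6)),
    (1, today - timedelta(days=10)),
]
dau_wau = stickiness(events, today)
d7 = retention(events, 7)

print(f"activation={rate:.0%} DAU/WAU={dau_wau:.2f} D7 retention={d7:.0%}")
```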

Purpose — Is it making work better?

High usage of a useless tool is just expensive noise.

This is where you shift from "how are we performing" to "what business value is this creating."

Faster customer response times, less tedious data entry, better search — the tangible improvements people feel.

Time saved per task, measured rather than estimated. Hours redirected from grunt work to high-value work.

Better outcomes, not just faster ones. Higher conversion rates, fewer errors, smarter decisions.
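Measuring real time saved means comparing observed task durations before and after the tool was introduced. Here is a minimal sketch, assuming hypothetical timing samples in minutes and a hypothetical monthly task volume. It uses the median so that one unusually slow task does not distort the comparison.

```python
from statistics import median

def time_saved_per_task(baseline_minutes, current_minutes):
    """Median task time before the tool minus median task time with it."""
    return median(baseline_minutes) - median(current_minutes)

# Hypothetical timing samples (minutes per task).
baseline = [14, 15, 16, 18, 22]   # measured before rollout
current = [9, 10, 11, 12, 30]     # measured after rollout, including one slow outlier

saved = time_saved_per_task(baseline, current)
improvement = saved / median(baseline)

# Hypothetical volume: 1,200 tasks per month.
hours_saved_per_month = saved * 1200 / 60

print(f"{saved} min saved per task ({improvement:.0%}), {hours_saved_per_month:.0f} hours/month")
```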

$ Impact — What's the financial return?

Translate everything above into the language the C-suite speaks.

Revenue (top line)

AI-driven recommendation engines boosting AOV, faster time-to-market for new products, conversion rate lifts. Not every initiative will move revenue — but when it does, quantify it.

Cost (bottom line)

Hours saved × loaded labor cost, reduced support volume, errors prevented. But don't forget the other side: licensing, compute, and maintenance costs. Net value is what matters.
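The net-value arithmetic is simple enough to sketch directly. All figures below are hypothetical and chosen only to show how a tool that saves real hours can still come out negative once costs are counted.

```python
def net_monthly_value(hours_saved, loaded_hourly_cost,
                      licensing, compute, maintenance, revenue_uplift=0.0):
    """Gross benefit (labor savings plus any revenue uplift) minus total running cost."""
    gross = hours_saved * loaded_hourly_cost + revenue_uplift
    total_cost = licensing + compute + maintenance
    return gross - total_cost

# Hypothetical monthly figures.
net = net_monthly_value(
    hours_saved=100,
    loaded_hourly_cost=65,   # salary plus benefits and overhead, per hour
    licensing=4000,
    compute=2000,
    maintenance=1500,
)

print(f"Net monthly value: {net}")
```

Here 100 saved hours are worth 6,500 against 7,500 in running costs, so the initiative loses 1,000 per month despite the time savings.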

How to actually use this

The four pillars form a progression. Don't skip ahead.

1. Capture baselines for the processes AI will affect. No baseline = no way to prove improvement.

2. Focus on Operation and Fit. Is it stable? Are people adopting? Fix these before measuring value.

3. Start measuring Purpose. Compare against baselines. Are tasks faster? Outcomes better?

4. Translate Purpose into $ Impact. Hours saved × cost, revenue uplift, total cost of ownership. Present as a portfolio view.

5. Use the scores to make decisions. Strong Fit but weak Purpose? Retrain. Strong Purpose but poor Impact? Too expensive. Low Fit? Fix adoption first.
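The decision rules in the last step can be written down as a small triage function. This is a sketch under assumptions: the 0-to-1 pillar scores, the 0.6 threshold, and the exact wording of each recommendation are hypothetical, and a real scorecard would set its own scales and cutoffs.

```python
def recommend(operation, fit, purpose, impact, threshold=0.6):
    """Walk the pillars in order and return the first action that applies.

    Scores are assumed to be normalized to the range 0..1.
    """
    if operation < threshold:
        return "Stabilize operations first"
    if fit < threshold:
        return "Fix adoption first"
    if purpose < threshold:
        return "Retrain"
    if impact < threshold:
        return "Too expensive: cut cost or reconsider"
    return "Scale"

# Hypothetical portfolio of initiatives with scores per pillar.
portfolio = {
    "support chatbot": dict(operation=0.9, fit=0.8, purpose=0.4, impact=0.3),
    "doc classifier": dict(operation=0.9, fit=0.3, purpose=0.7, impact=0.7),
    "code assistant": dict(operation=0.8, fit=0.9, purpose=0.8, impact=0.5),
    "search upgrade": dict(operation=0.9, fit=0.8, purpose=0.8, impact=0.8),
}

decisions = {name: recommend(**scores) for name, scores in portfolio.items()}
for name, action in decisions.items():
    print(f"{name}: {action}")
```

Checking the pillars in order mirrors the progression: a low score on an earlier pillar takes priority over anything that comes after it.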

Traps to avoid

- ⚠ Measuring only what's easy. Usage stats are simple to pull. Business impact is hard. Most orgs stop at Fit and call it success — without ever confirming value.

- ⚠ Demanding ROI on day one. Give initiatives time to stabilize and get adopted before demanding financial justification. Premature ROI asks kill good pilots.

- ⚠ Ignoring the cost side. Saving 100 hours/month sounds great — until the tool costs more than those hours were worth. Always measure net value.

- ⚠ Same KPIs for everything. A customer chatbot and an internal doc classifier need different metrics. The four pillars stay the same; the KPIs under each change.

The bottom line

The companies that win the AI era won't be the ones that adopt the most tools. They'll be the ones that know which tools are working, which aren't, and why. Operation, Fit, Purpose, Impact — four pillars, one shared scoreboard. That's how you turn AI investment from a leap of faith into a data-driven discipline.